CTDB database design

Latest revision as of 02:31, 4 May 2018

Database models

CTDB currently provides 3 types of databases.

Volatile

- Used to hold temporary Samba state

- Cleared when the first Samba process attaches

- Distributed across cluster nodes

- Can be stored in volatile storage, such as a tmpfs

Persistent

- Used to hold permanent Samba state

- Reside on permanent storage

- Replicated across cluster nodes

- Synced to disk during each transaction

Replicated

- Used to hold temporary CTDB state

- Cleared when the first CTDB process attaches

- Replicated across cluster nodes

- Can be stored in volatile storage, such as a tmpfs

- Faster to write than persistent databases, but with less safety

Distributed database design

Distributed databases have the following attributes:

- Fast to write a record because it is written locally

- Quite fast to read because a limited number of nodes are involved

- Slow to traverse because the data is distributed

Record distribution and migration concepts

CTDB uses 2 (relatively :-) simple concepts for doing the distribution:

- DMASTER (or data master)

- This is the node that has the most recent copy of a record.

- The big question is: How can you find this DMASTER? The answer is...

- LMASTER (or location master)

- This node always knows which node is DMASTER.

- The LMASTER for a record is calculated by hashing the record key, taking the result modulo the number of active, LMASTER-capable nodes, and mapping the resulting index to a node number via the VNNMAP.
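The LMASTER calculation described above can be sketched in a few lines. This is an illustration only, not CTDB's code: the function name `lmaster_for_key` is hypothetical, and CRC32 is used purely as a stand-in for whatever hash CTDB applies to the record key. The VNNMAP is modelled as a plain list of node numbers for the active, LMASTER-capable nodes.

```python
import zlib


def lmaster_for_key(key: bytes, vnnmap: list[int]) -> int:
    """Return the node number that acts as LMASTER for `key`.

    `vnnmap` lists the node numbers of the active, LMASTER-capable nodes.
    """
    if not vnnmap:
        raise ValueError("VNNMAP is empty: no LMASTER-capable nodes")
    # Stand-in hash (assumption): CRC32 is used only for illustration.
    key_hash = zlib.crc32(key)
    # Modulo the number of active, LMASTER-capable nodes...
    index = key_hash % len(vnnmap)
    # ...then map that index to a real node number via the VNNMAP.
    return vnnmap[index]


# Node 2 is not LMASTER-capable in this example, so it is absent from the map.
vnnmap = [0, 1, 3]
node = lmaster_for_key(b"some-record-key", vnnmap)
```

Because the result depends only on the key and the VNNMAP, every node that holds the same VNNMAP computes the same LMASTER for a record without any communication.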

Let's say you have 3 nodes (A, B, C) and node A wants a particular record. Let's say that node B is the LMASTER for that record.

There are 3 cases, depending on which node is DMASTER:

- DMASTER is A

- smbd will find the record locally. No migration is necessary. The LMASTER is not consulted.

- DMASTER is B

- A will ask B for the record. B will notice that it is DMASTER and will forward the record to A. The record will be updated on both A and B because the change of DMASTER must be recorded.

- DMASTER is C

- A will ask B for the record. B will notice that it is not DMASTER and forward the request to C. C forwards the record to B, which forwards it to A. The record will be updated on A, B and C because the change of DMASTER must be recorded.
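The three cases can be written down as a small model. This is a sketch of the description above rather than of CTDB's implementation: `migrate` is a hypothetical helper that returns the node-to-node hops and the set of nodes that must record the change of DMASTER. It only models the scenario in the text, where the requesting node is not itself the LMASTER.

```python
def migrate(requester: str, lmaster: str, dmaster: str) -> dict:
    """Model one record migration to `requester`.

    Returns the (source, destination) hops between nodes and the nodes
    whose local copy must be updated to record the new DMASTER.
    """
    if requester == lmaster:
        # Not covered by the three cases in the text, so not modelled here.
        raise ValueError("requester == lmaster is not modelled in this sketch")

    if dmaster == requester:
        # Record is already local: no migration, LMASTER not consulted.
        return {"hops": [], "updated": []}

    hops = [(requester, lmaster)]        # requester asks the LMASTER
    if dmaster != lmaster:
        hops.append((lmaster, dmaster))  # LMASTER forwards the request
        hops.append((dmaster, lmaster))  # DMASTER sends the record back
    hops.append((lmaster, requester))    # LMASTER forwards the record

    # Every node involved must record the change of DMASTER.
    updated = sorted({requester, lmaster, dmaster})
    return {"hops": hops, "updated": updated}


case_a = migrate("A", "B", "A")
case_b = migrate("A", "B", "B")
case_c = migrate("A", "B", "C")
```

Note that the number of hops never exceeds four in this model, however many nodes the cluster has.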

You can now add nodes D, E, F, ... and they will not affect migration of the record (if there is no contention for the record from those additional nodes).

Record creation

Record creation is a simplified version of the case where DMASTER is B. In this case the record does not exist on A, so A asks B for it. B notices that the record does not exist, so it creates an empty record and forwards it to A.

Performance problems due to contention

If there is heavy contention for a record then (at least) 2 different performance issues can occur:

- High hop count

- Before C gets the request from node B, C responds to a migration request from another node and is no longer DMASTER for the record. C must then forward the request back to the LMASTER. This can go on for a while. CTDB logs this sort of behaviour and keeps statistics.

- Record migrated away before smbd gets it

- The record is successfully migrated to node A and ctdbd informs the requesting smbd that the record is there. However, before smbd can grab the record, a request is processed to migrate the record to another node. smbd looks, notices that node A is not DMASTER and must once again ask ctdbd to migrate the record. smbd may log if there are multiple attempts to migrate a record.

- To get an initial understanding of what is logged and what the parameters are, try this :-)

git grep attempts source3/lib/dbwrap
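To make the high hop count case more concrete, here is a toy trace of a request that keeps arriving just after the record has moved on. It is a simplified model under stated assumptions, not CTDB's hop accounting: `trace_request` is a hypothetical helper, and each entry in `dmaster_sequence` is the node the LMASTER believes to be DMASTER on successive forwarding attempts, with only the last one still holding the record when the request arrives.

```python
def trace_request(lmaster: str, dmaster_sequence: list[str]) -> list[str]:
    """Return the nodes visited by a request, starting at the LMASTER.

    All but the last node in `dmaster_sequence` have already given the
    record away by the time the request reaches them.
    """
    if not dmaster_sequence:
        raise ValueError("need at least one DMASTER candidate")

    path = [lmaster]
    for stale_dmaster in dmaster_sequence[:-1]:
        path.append(stale_dmaster)  # forwarded to a node that lost the record
        path.append(lmaster)        # bounced back to the LMASTER
    path.append(dmaster_sequence[-1])  # finally reaches the current DMASTER
    return path


# No contention: the request goes straight from B to C.
quiet = trace_request("B", ["C"])

# Heavy contention: the record moved from C to D to E while the request chased it.
busy = trace_request("B", ["C", "D", "E"])
busy_hops = len(busy) - 1
```

In this model each lost race adds two hops, which is why a hot record can produce hop counts far above the uncontended case.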

Read-only and sticky records are existing features that may help to counteract contention.

Technical summary

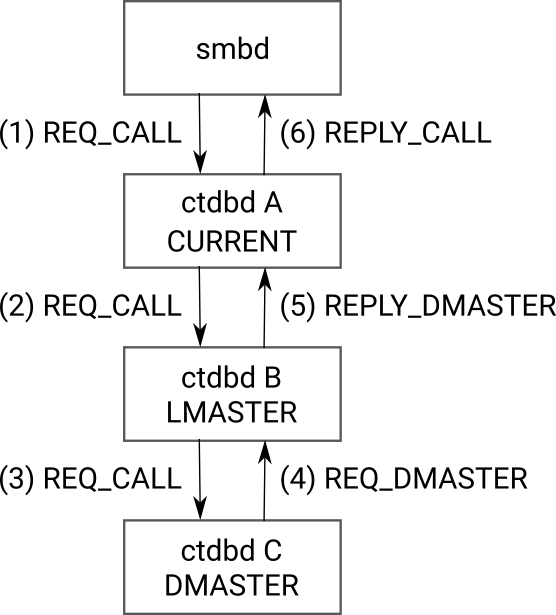

There are 4 packet types used as part of record migration:

- REQ_CALL

- Sent by a client to request that CTDB migrates a record

- Forwarded by CTDB to the LMASTER or DMASTER when the record is not local

- REPLY_CALL

- A reply sent by CTDB to tell a client that the requested record is now locally available

- REQ_DMASTER

- Sent by CTDB from the current DMASTER to tell the LMASTER that it should hand over DMASTER status for the record to a particular node

- REPLY_DMASTER

- Sent by CTDB from the LMASTER to tell a node that it is now DMASTER for a record

The diagram at the top of this section shows the packet flow.

There are 2 simplifications possible:

- If B is DMASTER as well as LMASTER then the diagram can be truncated to the sequence 1 → 2 → 5 → 6

- If A is already DMASTER then the diagram can be further truncated to the sequence 1 → 6
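The packet types and the two simplifications can be tied together in a short sketch. The assignment of packet types and endpoints to step numbers 1 to 6 is an assumption inferred from the packet descriptions and the truncated sequences above, using the earlier example where A requests the record, B is LMASTER and C is DMASTER. The names `Packet`, `FULL_FLOW` and `steps_for` are hypothetical.

```python
from enum import Enum


class Packet(Enum):
    REQ_CALL = "REQ_CALL"
    REPLY_CALL = "REPLY_CALL"
    REQ_DMASTER = "REQ_DMASTER"
    REPLY_DMASTER = "REPLY_DMASTER"


# Assumed numbering: step -> (sender, receiver, packet type).
FULL_FLOW = {
    1: ("client on A", "ctdbd on A", Packet.REQ_CALL),
    2: ("ctdbd on A", "ctdbd on B", Packet.REQ_CALL),
    3: ("ctdbd on B", "ctdbd on C", Packet.REQ_CALL),
    4: ("ctdbd on C", "ctdbd on B", Packet.REQ_DMASTER),
    5: ("ctdbd on B", "ctdbd on A", Packet.REPLY_DMASTER),
    6: ("ctdbd on A", "client on A", Packet.REPLY_CALL),
}


def steps_for(dmaster: str) -> list[int]:
    """Return the step numbers used when `dmaster` holds the record."""
    if dmaster == "A":
        return [1, 6]           # record is already local to the requester
    if dmaster == "B":
        return [1, 2, 5, 6]     # LMASTER is also DMASTER
    return [1, 2, 3, 4, 5, 6]   # full flow via a third node


def packets_for(dmaster: str) -> list[Packet]:
    """Return the packet types sent, in order, for the given DMASTER."""
    return [FULL_FLOW[step][2] for step in steps_for(dmaster)]


for step in steps_for("B"):
    sender, receiver, packet = FULL_FLOW[step]
    print(f"{step}: {sender} -> {receiver}: {packet.value}")
```

Under this numbering, REQ_DMASTER only appears in the full flow, because it is needed only when the LMASTER and the DMASTER are different nodes.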